GPT Image 2 Image-to-Image Troubleshooting: Fix Composition, Lighting, and Details

GPT Image 2 Team

May 10, 2026

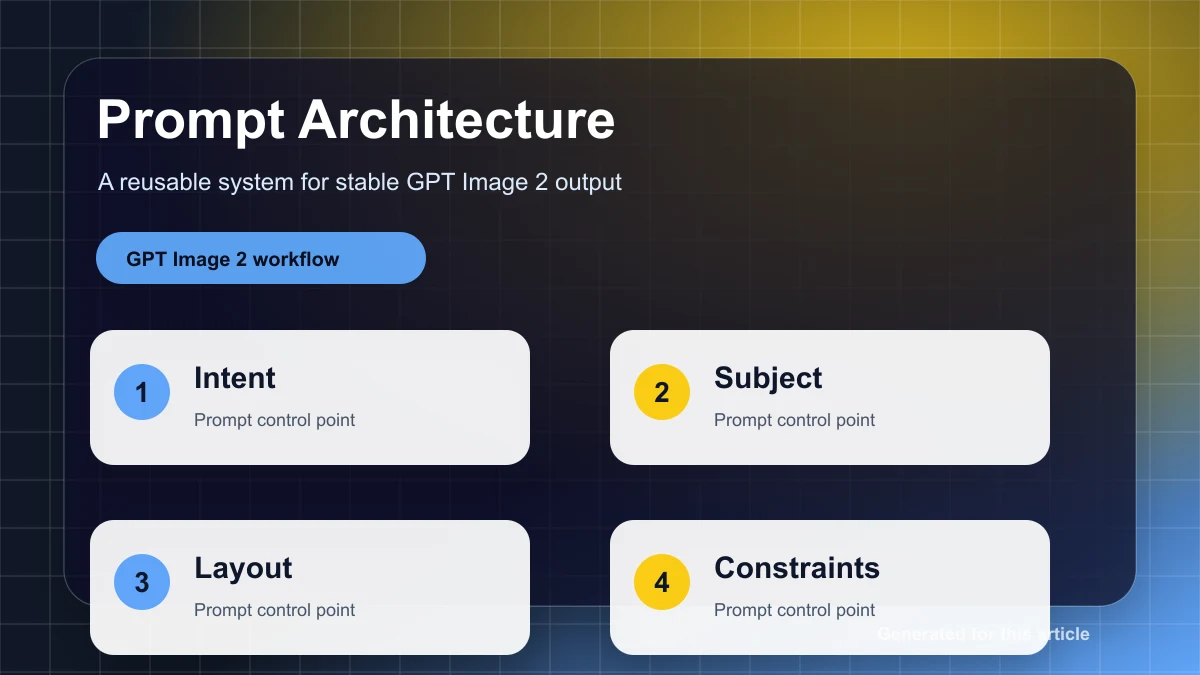

A practical image-to-image troubleshooting guide for GPT Image 2 and diffusion workflows: diagnose composition drift, lighting mismatch, face and hand errors, mask spillover, blurry texture, and edge artifacts.

Image-to-image editing usually fails in predictable ways. The subject gets cropped. A hand grows extra fingers. The new object looks pasted on. A masked edit changes the whole face. The output gets darker after every pass. The tempting reaction is to rerun the same prompt, add words like "realistic" or "high quality," or increase steps. That is not troubleshooting. That is gambling with more compute.

The practical rule is simple: fix structure first, then lighting, then details. Composition errors are geometry problems. Lighting errors are compositing problems. Detail errors are usually local repair problems. Treating all three as prompt wording problems leads to unstable results.

This guide is written for GPT Image 2 users, but the framework also applies to Stable Diffusion, Diffusers, ComfyUI, WebUI, and other diffusion-based image-to-image pipelines. The main difference is the control surface. GPT Image 2 exposes higher-level controls such as prompt, input image, mask, size, quality, output format, compression, and background. Traditional diffusion workflows often expose strength or denoise, CFG or guidance scale, steps, sampler, scheduler, seed, ControlNet, IP-Adapter, and stricter inpaint mask behavior.

That difference matters. GPT Image 2 is often strong when you describe an edit clearly and provide the right input images. It is not the best tool when you need a Photoshop-like hard mask that preserves every unmasked pixel. Diffusion inpainting is usually better for strict local repair. Use the smallest tool that solves the actual defect.

The Diagnostic Order: Structure, Light, Detail

Before changing any parameter, classify the failure.

If the subject is cropped, the horizon is wrong, the pose changed, the left and right people swapped identities, or a table has impossible perspective, you have a composition problem. Do not start by increasing steps or sharpening the image. Check the aspect ratio, canvas, mask scope, and structural references first.

If the object is in the right place but looks pasted on, the subject is too blue for a warm room, the shadow goes in the wrong direction, or the edited clothing fights the original lighting, you have a lighting problem. Lock the geometry, then repair main light direction, contact shadows, exposure, and color temperature.

If the image is structurally correct and the lighting mostly works, then repair details: face likeness, hands, hair, fabric, product edges, logos, halos, and texture. Detail work should usually be local. A full-image rerender to fix three fingers is a bad trade.

This order prevents the most common failure spiral: repairing skin on a face that is already the wrong person, sharpening an object that is in the wrong perspective, or relighting a subject that should have been re-composed first.
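The hypothetical helper below turns the diagnostic order into a triage step. It is not part of GPT Image 2 or any diffusion tool. It tags each reported symptom with a stage, sorts the symptoms by stage, and returns only the earliest stage as the work to do now. The symptom names are illustrative assumptions.

```python
from enum import IntEnum


class Stage(IntEnum):
    """Repair stages in the order this guide recommends fixing them."""
    STRUCTURE = 1  # composition / geometry problems
    LIGHT = 2      # compositing / lighting problems
    DETAIL = 3     # local repair problems


# Hypothetical symptom tags mapped to the stage that owns them.
SYMPTOM_STAGE = {
    "cropped_subject": Stage.STRUCTURE,
    "wrong_horizon": Stage.STRUCTURE,
    "pose_changed": Stage.STRUCTURE,
    "swapped_identities": Stage.STRUCTURE,
    "impossible_perspective": Stage.STRUCTURE,
    "pasted_on_look": Stage.LIGHT,
    "wrong_color_temperature": Stage.LIGHT,
    "wrong_shadow_direction": Stage.LIGHT,
    "face_likeness": Stage.DETAIL,
    "hands": Stage.DETAIL,
    "halo": Stage.DETAIL,
    "blurry_texture": Stage.DETAIL,
}


def repair_plan(symptoms):
    """Return (all symptoms in repair order, symptoms to fix right now).

    sorted() is stable, so symptoms within one stage keep their input order.
    Only the earliest stage is actionable: later fixes build on its result.
    """
    ordered = sorted(symptoms, key=lambda s: SYMPTOM_STAGE[s])
    if not ordered:
        return ordered, []
    first_stage = SYMPTOM_STAGE[ordered[0]]
    fix_now = [s for s in ordered if SYMPTOM_STAGE[s] == first_stage]
    return ordered, fix_now
```

For example, `repair_plan(["hands", "pasted_on_look", "cropped_subject"])` puts the crop first and leaves hands for last.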

GPT Image 2 vs Diffusion I2I: What You Can Actually Control

For GPT Image 2, your main levers are:

| Control | Practical use | Common mistake |
|---|---|---|
| Prompt | Defines the edit goal and preservation rules | Asking for a broad redesign when you only need a local fix |
| Input image | Provides identity, layout, style, and context | Supplying a weak reference and expecting exact geometry |
| Mask | Guides where the model should edit | Treating it as a hard pixel boundary |
| Size / aspect ratio | Sets the composition container | Using a square canvas for a full-body vertical subject |
| Quality | Balances detail, cost, and latency | Using final quality for every debugging attempt |
| Multiple references | Helps with identity, object replacement, and style | Expecting a style reference to also enforce pose or perspective |

For diffusion image-to-image, the useful levers are more granular:

| Parameter | What it changes | Useful starting point |
|---|---|---|
| strength / denoise | How much the input image is rewritten | Local repair: 0.15-0.35; lighting: 0.30-0.50; structure change: 0.50-0.75 |
| CFG / guidance_scale | How strongly the model follows the prompt | Realistic edits: 4-6; general default: 6-8 |
| steps | Denoising quality and runtime | Fast tests: 20-30; balanced: 30-50; difficult detail work: 50-80 |
| seed | Reproducibility for A/B tests | Fix it during diagnosis |
| sampler / scheduler | Denoising trajectory and failure mode | Pick one and hold it steady before comparing parameters |
| ControlNet scale | Strength of structure guidance | Soft: 0.4-0.6; strong: 0.6-0.8 |
| IP-Adapter scale | Strength of reference-image influence | Style: 0.4-0.6; identity or appearance: 0.6-0.8 |
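You can keep the strength ranges above in a small lookup so every test starts from the same defaults. The sketch below also shows one interaction worth knowing. In Diffusers' img2img pipelines, only about `int(steps * strength)` denoising steps actually run, because the schedule starts partway through. The task names are hypothetical.

```python
# Suggested starting ranges from the table above, keyed by hypothetical task names.
STRENGTH_RANGES = {
    "local_repair": (0.15, 0.35),
    "lighting": (0.30, 0.50),
    "structure_change": (0.50, 0.75),
}


def strength_in_range(task, strength):
    """True if `strength` sits inside the suggested starting range for `task`."""
    low, high = STRENGTH_RANGES[task]
    return low <= strength <= high


def effective_steps(num_inference_steps, strength):
    """Denoising steps a Diffusers-style img2img run actually executes.

    Mirrors min(int(steps * strength), steps): low strength skips the early
    part of the schedule and runs only the last steps.
    """
    return min(int(num_inference_steps * strength), num_inference_steps)
```

For example, 30 steps at strength 0.25 executes only 7 denoising steps. Keep this in mind when you compare step counts across different strength settings.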

Three rules keep parameter tuning sane.

First, steps do not reliably fix structure. They may improve texture and edges, but they will not consistently repair a wrong pose, bad horizon, or swapped subject relationship.

Second, CFG is not "quality." Too little guidance ignores the prompt. Too much guidance can make images oversaturated, brittle, or less natural. Raise it only when the model clearly ignores a specific instruction.

Third, do not test ten variables at once. During diagnosis, lock seed, size, sampler, and input. Change one major variable: mask scope, denoise, control map, reference image, or prompt constraint.
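Here is a minimal sketch of the one-variable rule. It assumes run settings live in a plain dictionary, and the keys shown are hypothetical, not a specific tool's parameter names. Every run is a copy of the locked base config with exactly one value changed. `changed_keys` lets you confirm that two runs differ in only one place.

```python
from copy import deepcopy


def one_variable_sweep(base, variable, values):
    """Return configs identical to `base` except for `variable`.

    Seed, size, sampler, and input stay locked because they are copied
    unchanged from `base`; only `variable` differs between runs.
    """
    if variable not in base:
        raise KeyError(f"unknown variable: {variable}")
    runs = []
    for value in values:
        cfg = deepcopy(base)  # never mutate the locked base config
        cfg[variable] = value
        runs.append(cfg)
    return runs


def changed_keys(a, b):
    """Sorted list of keys whose values differ between two configs."""
    return sorted(k for k in a.keys() | b.keys() if a.get(k) != b.get(k))
```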

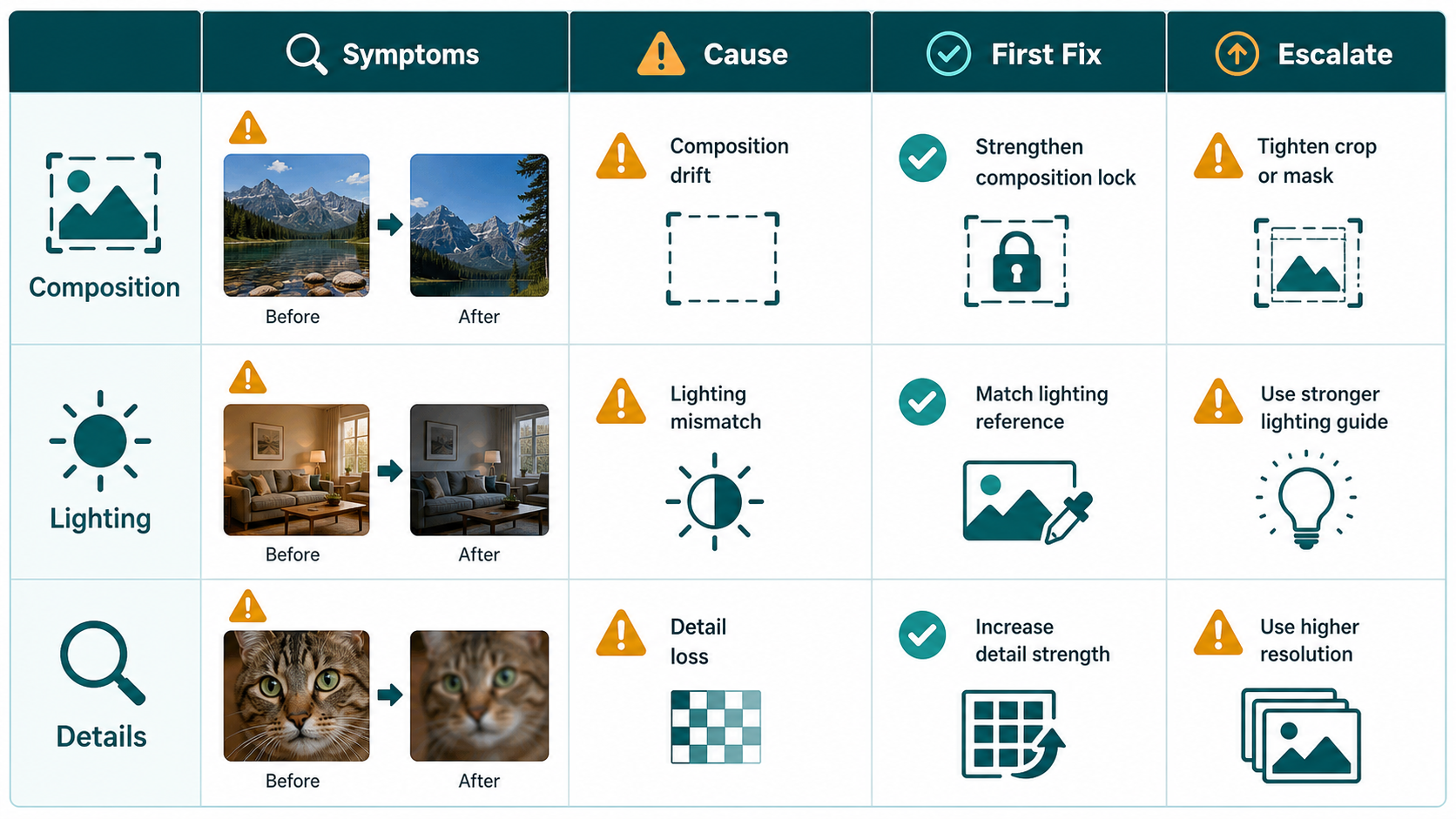

Common Failure Library and First Fixes

Use this issue library as a fast triage table.

| Symptom | Likely cause | Priority | First fix |
|---|---|---|---|
| Masked edit changes the face, background, or whole image | The mask is being treated as a suggestion, not a hard boundary; the prompt asks for too much | P0 | Crop a smaller region, narrow the edit goal, and write a strict preserve list. If pixels must remain untouched, use diffusion inpaint. |
| Subject is cropped, head missing, limbs out of frame | Wrong aspect ratio, tight canvas, missing "complete subject" instruction | P0 | Change size or outpaint first. Ask for full body, complete subject, natural margins. |
| Sketch-to-real output loses perspective | Semantic prompt without structural control; denoise too high | P0 | Use depth, canny, or lineart guidance. Lower denoise. Separate structure repair from material rendering. |
| Two people swap roles or share body parts | Prompt leakage across subjects; no region separation | P0 | Use separate subject descriptions, masks, regional prompting, or pose control. |
| Inserted object looks like a sticker | No contact shadow, wrong scale, mask excludes contact area | P0 | Repair the object base and shadow area, not only the object. Specify contact shadow direction and softness. |
| Output gets darker after repeated passes | Loopback or repeated low-denoise edits accumulate exposure drift | P1 | Stop looping. Do a separate exposure and white-balance pass. |
| Clothing replacement has wrong light direction | The garment reference has different lighting; prompt does not lock scene light | P1 | Preserve camera and background. Match clothing to original light direction, shadows, and color temperature. |
| Face no longer looks like the person | Face was included in a broad full-image render | P0 | Use face-only repair with identity reference and preserve expression, face shape, age, hair, and proportions. |
| Hands have wrong finger count or broken joints | Complex contact, weak pose constraint, or conflicting prompt | P0 | Mask only the hand and contact point. Use hand pose reference or openpose. Repair left and right hands separately. |
| Texture becomes blurry after upscaling | Upscaling and repainting were mixed in one high-denoise pass | P1 | Upscale first, then use low-denoise local repair. |
| White edge, halo, or fringing | Mask too tight; transparent-background expectation mismatch | P1 | Use an edge-ring mask that covers both sides of the boundary. For GPT Image 2, output opaque first and cut out downstream. |

P0 means the image cannot be delivered until fixed. P1 means the defect is visible and hurts quality. P2 defects are small enough to handle in the final polish pass.

Composition Troubleshooting

Composition problems are the most expensive to ignore. If the geometry is wrong, later fixes build on a bad base.

For cropped subjects, start with the canvas. A vertical full-body image needs a vertical frame. A product hero with room for labels may need horizontal space. If the original subject is already cut off, outpaint or expand the canvas before asking for a nicer render. In GPT Image 2, keep the prompt direct: "move the camera back 10 to 20 percent, complete the missing head and arms, preserve the same face, outfit, background, camera height, and light direction."
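In a diffusion outpainting workflow, "move the camera back 10 to 20 percent" roughly means enlarging the canvas and centering the original on it. The sketch below computes that canvas. The function name and rounding rule are assumptions. The rounding reflects that Stable Diffusion-style pipelines typically require width and height divisible by 8.

```python
import math


def outpaint_canvas(width, height, pull_back=0.15, multiple=8):
    """Return (new_w, new_h, left, top) for a centered outpaint canvas.

    pull_back=0.15 enlarges each dimension by 15%, roughly "moving the
    camera back" by that amount. Sizes are rounded up to `multiple`.
    """
    if not 0 <= pull_back <= 1:
        raise ValueError("pull_back should be a fraction such as 0.10-0.20")

    def round_up(x):
        return int(math.ceil(x / multiple)) * multiple

    new_w = round_up(width * (1 + pull_back))
    new_h = round_up(height * (1 + pull_back))
    left = (new_w - width) // 2  # offset that centers the original image
    top = (new_h - height) // 2
    return new_w, new_h, left, top
```

With the defaults, `outpaint_canvas(768, 1024)` returns `(888, 1184, 60, 80)`. Place the original at offset (60, 80), then mask everything outside it for the outpaint pass.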

For perspective problems, add structure. In diffusion workflows, use depth for interiors, architecture, furniture, and spatial relationships. Use canny or lineart for products, logos, hard edges, diagrams, and sketch-to-render work. Use pose or keypoints for humans. Do not use openpose to preserve a product silhouette. Do not use canny and expect it to understand elbow direction.

For two-person scenes, separate the subjects in the prompt. "The person on the left" and "the person on the right" should have separate identity, clothing, pose, and action descriptions. If your tool supports masks, regional prompting, or segmentation, use it. Many multi-subject failures are not "bad hands"; they are bad region ownership.

Lighting Troubleshooting

Lighting failures are usually compositing failures. The edited object may be semantically correct, but it does not belong to the scene.

The four things to specify are main light direction, shadow behavior, color temperature, and exposure. "Make it realistic" is weak. "Match the existing warm left-side window light, add a soft contact shadow under the shoes, keep the background exposure unchanged, and preserve neutral skin tones" is useful.
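One way to keep lighting prompts specific is to refuse to build them until all four facts are filled in. The hypothetical `LightingSpec` class below is only a string template. It rebuilds the example sentence above from its four parts and raises an error if any part is blank.

```python
from dataclasses import dataclass


@dataclass
class LightingSpec:
    """The four lighting facts worth stating explicitly in an edit prompt."""
    light_direction: str  # e.g. "warm left-side window light"
    shadow: str           # e.g. "a soft contact shadow under the shoes"
    exposure: str         # e.g. "keep the background exposure unchanged"
    color: str            # color temperature / skin-tone rule, e.g. "neutral skin tones"

    def to_prompt(self) -> str:
        # Refuse to produce a vague prompt: every field must be non-blank.
        missing = [name for name, value in vars(self).items() if not value.strip()]
        if missing:
            raise ValueError(f"lighting spec is missing: {', '.join(missing)}")
        return (
            f"Match the existing {self.light_direction}, add {self.shadow}, "
            f"{self.exposure}, and preserve {self.color}."
        )
```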

When an object looks pasted on, do not repaint the whole object first. Repair the contact zone: feet on floor, product base on table, dog paws on grass, cup edge on counter, poster edge on wall. The mask should include the object boundary and the surface receiving the shadow. The prompt should mention contact shadow, occlusion shadow, reflection if relevant, and matching shadow softness.
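Here is a sketch of a contact-zone mask box. It assumes the shadow falls below the object, as with feet on a floor or a product on a table. It also assumes you already have the object's bounding box from a selection or detector. The function name and default fractions are illustrative, not tested recommendations. A poster on a wall would need a box along its edge instead.

```python
def contact_zone_box(obj_box, image_size, below=0.25, side=0.10, overlap=0.15):
    """Return a mask box covering an object's base and the surface under it.

    obj_box is (left, top, right, bottom) in pixels. The box keeps the lower
    `overlap` fraction of the object (its boundary), extends `below` x object
    height beneath it for the shadow, and widens by `side` x object width on
    each side. Results are clamped to the image.
    """
    left, top, right, bottom = obj_box
    img_w, img_h = image_size
    w, h = right - left, bottom - top
    zone_top = bottom - round(h * overlap)
    zone_bottom = min(img_h, bottom + round(h * below))
    zone_left = max(0, left - round(w * side))
    zone_right = min(img_w, right + round(w * side))
    return zone_left, zone_top, zone_right, zone_bottom
```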

If repeated edits make the image too yellow, too dark, or too contrasty, stop editing the content. Run one separate color pass. Ask for unified white balance and exposure while preserving composition, identity, material, and texture. Avoid combining "replace the jacket" and "fix the entire color grade" in the same pass unless you are prepared for drift.
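To catch drift before it compounds, you can log mean brightness after every pass. This sketch computes the mean Rec. 709 luma of RGB pixel tuples and flags the first pass that falls more than a chosen fraction below the original. The helper names and the 5 percent threshold are assumptions. It detects only darkening, not a white-balance shift.

```python
def mean_luma(pixels):
    """Mean Rec. 709 luma of an iterable of (r, g, b) values in 0-255."""
    total = 0.0
    count = 0
    for r, g, b in pixels:
        total += 0.2126 * r + 0.7152 * g + 0.0722 * b
        count += 1
    if count == 0:
        raise ValueError("no pixels")
    return total / count


def exposure_drift(pass_lumas, max_drop=0.05):
    """Index of the first pass whose mean luma fell more than `max_drop`
    (a fraction) below the original at index 0, or None if none did."""
    if not pass_lumas:
        raise ValueError("need at least the original image's luma")
    baseline = pass_lumas[0]
    for i, value in enumerate(pass_lumas[1:], start=1):
        if value < baseline * (1 - max_drop):
            return i
    return None
```

When `exposure_drift` returns an index, that is the point to stop content edits and run the separate color pass.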

Detail Troubleshooting

Details should be repaired after structure and lighting are stable.

Faces need small masks and identity constraints. Mask the whole face plus a little surrounding context: hairline, chin, ears, and adjacent skin. Do not mask only one eye unless you want asymmetry. Tell the model to preserve exact likeness, face shape, age, expression, hairstyle, skin tone, and camera angle. Ask for natural skin texture, not plastic smoothing.
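A detector or manual selection often gives a tight face box. Adding a margin before masking makes the mask include the hairline, chin, and ears. The `expand_box` helper and its 20 percent default margin are illustrative assumptions. The same idea applies to hand masks that need wrist and contact-area context.

```python
def expand_box(box, image_size, margin=0.2):
    """Grow (left, top, right, bottom) by `margin` x box size on every side.

    The result is clamped to the image so the mask never leaves the frame.
    """
    left, top, right, bottom = box
    img_w, img_h = image_size
    dx = round((right - left) * margin)
    dy = round((bottom - top) * margin)
    return (
        max(0, left - dx),
        max(0, top - dy),
        min(img_w, right + dx),
        min(img_h, bottom + dy),
    )
```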

Hands need context too. Mask the palm, fingers, wrist, object contact area, and a bit of background. Preserve the gesture intention and the object position. If both hands are wrong, repair them separately. For complex hand-object interactions, a pose or hand reference is worth more than a longer negative prompt.

Edges need an edge-ring mask. If a product has haloing, the mask must cover the boundary inside and outside the product edge. A mask that only covers the object interior will not fix the transition. For GPT Image 2 workflows, it is often cleaner to generate or edit on an opaque background first, then remove the background in a downstream step.
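You can build an edge-ring mask by subtracting an eroded copy of the object mask from a dilated copy. The result is a band that straddles the boundary. The pure-Python sketch below uses a square kernel on a 0/1 grid for clarity. In practice, a library morphology routine such as OpenCV's `cv2.dilate` and `cv2.erode` does the same job much faster. The ring radius is an assumption to tune per image.

```python
def _morph(mask, radius, grow):
    """Square-kernel dilation (grow=True) or erosion (grow=False) of a 0/1 grid.

    Windows are clipped at the image border, so an object touching the
    border is not eroded from that side.
    """
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            window = [
                mask[yy][xx]
                for yy in range(max(0, y - radius), min(h, y + radius + 1))
                for xx in range(max(0, x - radius), min(w, x + radius + 1))
            ]
            out[y][x] = int(any(window)) if grow else int(all(window))
    return out


def edge_ring(mask, radius=2):
    """Band of about `radius` pixels on both sides of the object boundary."""
    grown = _morph(mask, radius, grow=True)
    shrunk = _morph(mask, radius, grow=False)
    # Ring = inside the dilated mask AND outside the eroded mask.
    return [[g & (1 - s) for g, s in zip(g_row, s_row)]
            for g_row, s_row in zip(grown, shrunk)]
```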

Texture needs a two-step workflow. First upscale or use super-resolution. Then repaint only the weak texture area at low denoise or with a narrow edit prompt. If you combine high-denoise repainting with upscaling in one pass, you often get a larger but blurrier image, not better detail.

Copy-Paste Prompt Templates

Use these as structured prompts. For GPT Image 2, paste the whole template and fill the brackets. For diffusion, move "do not" clauses into the negative prompt when useful.
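If you keep templates as labeled sections, the same data can produce both a GPT Image 2 prompt and a diffusion prompt pair. The helpers below are hypothetical. `build_prompt` joins sections as `Label: text` lines and puts "Do not" last. `split_for_diffusion` moves the "Do not" clause into a separate negative prompt.

```python
def build_prompt(sections):
    """Render template sections as 'Label: text' lines, keeping 'Do not' last.

    `sections` is an insertion-ordered dict such as
    {"Task": "...", "Preserve": "...", "Change": "...", "Do not": "..."}.
    """
    lines = [f"{label}: {text}" for label, text in sections.items() if label != "Do not"]
    if sections.get("Do not"):
        lines.append(f"Do not: {sections['Do not']}")
    return "\n".join(lines)


def split_for_diffusion(sections):
    """Return (positive_prompt, negative_prompt) for a diffusion pipeline."""
    positive = {label: text for label, text in sections.items() if label != "Do not"}
    negative = sections.get("Do not", "")
    return build_prompt(positive), negative
```

Negative prompts in diffusion tools often work better as short phrases, such as "extra people, changed background", than as instructions. You may want to rephrase the moved clause.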

1. Fix Cropping and Missing Body Parts

Task: Recompose the input image so the subject is fully visible while preserving the original identity, clothing, material, background style, camera height, and time of day.

Preserve: face, hairstyle, body proportions, clothing colors, background layout, light direction.

Change: move the camera back by about 10 to 20 percent, complete the missing head, arms, hands, legs, and feet, and leave natural margins around the subject.

Composition: keep the original perspective and subject direction. Do not mirror the image or change left-right relationships.

Do not: add people, change the background, change the expression, change color temperature, or change exposure.

Diffusion start: denoise 0.30-0.50. Add depth guidance if the room or architecture is unstable.

2. Correct Perspective and Proportions

Task: Correct perspective and proportion errors in the input image.

Preserve: subject identity, scene content, materials, lighting, and the main camera angle.

Change: make vertical lines vertical, stabilize the horizon, align floor/table/building vanishing lines, and correct stretched or compressed shapes.

Composition: keep the existing subject relationships. Do not redesign the scene.

Do not: add new elements, change light direction, or change the person or product identity.

Diffusion start: depth 0.7-0.9 for interiors or architecture; canny/lineart 0.5-0.8 for products and drawings; denoise 0.20-0.40.

3. Lock Two Subjects and Their Left-Right Relationship

Task: Fix the two-subject pose and left-right relationship.

Left subject: keep as [Character A], preserving hairstyle, face shape, skin tone, clothing, and facing direction.

Right subject: keep as [Character B], preserving hairstyle, face shape, skin tone, clothing, and facing direction.

Pose: left subject performs [Action A], right subject performs [Action B]. Do not swap positions. Do not share hands or gestures between them.

Composition: keep the camera angle and scene unchanged.

Do not: create extra arms, extra fingers, wrong left/right hands, mixed identity, or mixed skin tone.

Use pose control, segmentation, or regional prompting when available.

4. Match Light Direction

Task: Fix lighting consistency only.

Preserve: subject identity, background, camera position, composition, action, and materials.

Change: make the main light come from [upper left / upper right / side / back]. Align highlights, midtones, shadows, and cast shadows with that light direction.

Shadows: create natural contact shadows and ambient shadows with softness matching the scene.

Do not: change the pose, background, color temperature, or white balance.

Diffusion start: denoise 0.25-0.45. For shadow-only fixes, mask only the shadow and contact area.

5. Remove Sticker-Like Object Placement

Task: Make [person/object/animal] belong naturally in the scene instead of looking pasted on.

Preserve: the subject appearance and every unmasked region.

Change: add realistic contact shadow, subtle occlusion shadow, and necessary reflection or bounce light around the contact point.

Spatial relationship: match shadow direction and shadow density to the existing floor, wall, table, or ground material.

Do not: change subject shape, background layout, or subject color.

If there are several contact points, repair them in small separate passes.

6. Unify Exposure and Color Temperature

Task: Unify exposure and color temperature so the image looks as if it were captured by one camera at one moment.

Preserve: composition, subject identity, background, material, and texture.

Change: restore natural white balance, prevent blown highlights, keep shadows readable, and make skin tones natural. Overall color temperature should be [warm sunset / neutral daylight / cool overcast].

Do not: change scene content, add a filter look, or apply heavy cinematic grading.

Do this as its own pass. Do not combine it with a large structure edit.

7. Repair Face Details

Task: Repair facial details only.

Preserve: exact likeness, face shape, age, expression, hairstyle, skin tone, and camera angle.

Change: fix eye symmetry, pupil direction, eyelashes, nostrils, lip edges, teeth, ears, and natural skin texture.

Quality: realistic photographic detail, no over-smoothing, no cartoon style.

Do not: change expression, change facial proportions, affect hair, or affect the background.

Mask the full face with a little surrounding context. Upscale first if the face is tiny.

8. Repair Hands

Task: Repair hand structure only.

Preserve: gesture intention, left-right hand relationship, contact position with objects, subject identity, and background.

Change: give each hand the correct number of fingers, anatomically correct joint bends, a plausible palm direction, and natural fingertip contact.

Detail: restore knuckles, nails, palm creases, and shadows without exaggeration.

Do not: add hands, swap left and right hands, or move the held object.

Repair left and right hands separately if both are broken.

9. Clean Texture and Edge Artifacts

Task: Clean edge artifacts and restore realistic texture.

Preserve: subject shape, label text, color, and overall composition.

Change: remove white edges, halos, fringing, jagged borders, and blurry edges. Restore clear [hair/fabric/leather/product surface] texture and natural micro-contrast.

Background: keep the edge transition natural with no new glow.

Do not: redesign the subject, change text, or change background color.

Use an edge-ring mask. For product cutouts, edit on opaque first, then remove the background downstream.

Strategy: Inpaint, Control, or Rerender?

Local inpaint is the default for small defects. It has the lowest drift and usually protects identity and background best. Use it for faces, hands, edges, contact shadows, and small texture failures.

Crop-first inpaint is even better for tiny defects. Crop the problem area, repair it at higher apparent resolution, then place it back into the full image. This is useful for eyes, fingers, product edges, and labels.
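The crop-and-return bookkeeping is simple but easy to get wrong. This sketch treats an image as a list of pixel rows. It crops a defect box plus some context padding, then pastes a repaired patch back at the recorded offset. The function names are hypothetical. The sketch omits upscaling before repair, downscaling after, and any seam feathering, so the patch must come back at the crop's original size.

```python
def crop_with_padding(image, box, pad):
    """Crop (left, top, right, bottom) plus `pad` pixels of context, clamped.

    `image` is a list of rows; each row is a list of pixel values.
    Returns the crop and the (left, top) offset needed to paste it back.
    """
    h, w = len(image), len(image[0])
    left, top, right, bottom = box
    l, t = max(0, left - pad), max(0, top - pad)
    r, b = min(w, right + pad), min(h, bottom + pad)
    crop = [row[l:r] for row in image[t:b]]
    return crop, (l, t)


def paste_back(image, patch, offset):
    """Return a copy of `image` with `patch` written at `offset`."""
    l, t = offset
    out = [row[:] for row in image]  # leave the original image untouched
    for dy, row in enumerate(patch):
        out[t + dy][l:l + len(row)] = row
    return out
```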

Full-image masked edit is useful for semantic changes such as outfit replacement, object insertion, or broad style changes. It is not a guarantee that unmasked pixels will remain untouched, especially in GPT Image 2. Use it when some drift is acceptable.

Full rerender is for broken structure. If the original layout is wrong, rerendering may be cleaner than fighting many local patches. Accept that identity, light, and detail may need follow-up repairs.

Control images solve structural problems. Canny and lineart preserve edges. Depth preserves space and perspective. Pose preserves human joint relationships. Segmentation and regional prompting reduce subject mixing. IP-Adapter and reference images preserve identity, product appearance, or style, but they do not replace structural controls.

The blunt distinction is this: local inpaint fixes defects; rerendering redesigns the image. Do not use one when you need the other.
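The four strategies above form a decision order: check broken structure first, then semantic changes, then defect size. Here is a minimal sketch with hypothetical flag and strategy names:

```python
def choose_strategy(*, structure_broken=False, semantic_change=False, tiny_defect=False):
    """Return a repair strategy name following this section's rules.

    Checks run from most to least disruptive: a broken layout outranks every
    local concern, and a semantic change outranks defect size.
    """
    if structure_broken:
        return "full_rerender"           # expect follow-up identity, light, detail repairs
    if semantic_change:
        return "full_image_masked_edit"  # accept some drift outside the mask
    if tiny_defect:
        return "crop_first_inpaint"      # eyes, fingers, product edges, labels
    return "local_inpaint"               # default for small defects
```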

Quick Troubleshooting Checklist

- Subject cropped or limbs out of frame: change aspect ratio or expand canvas first.

- Perspective wrong: use depth, canny, or lineart before raising steps.

- Two people mixed together: split the subjects by region, mask, or prompt structure.

- Mask spills outside the intended area: crop smaller and narrow the prompt; switch to diffusion inpaint if hard pixel preservation matters.

- Image gets darker after repeated edits: stop loopback and run one exposure pass.

- Object looks pasted on: repair contact shadow and surface interaction.

- Color temperature drifts: do one white-balance pass with a specific target such as neutral daylight or warm sunset.

- Face likeness drifts: use face-only repair with identity reference and strict preservation instructions.

- Hands break: small mask, hand reference or pose, one hand at a time.

- Texture blurs: upscale first, then low-denoise local repair.

- Edge halo appears: use an edge-ring mask, not an object-interior mask.

- Debugging feels random: lock seed, size, sampler, and input; change one variable only.

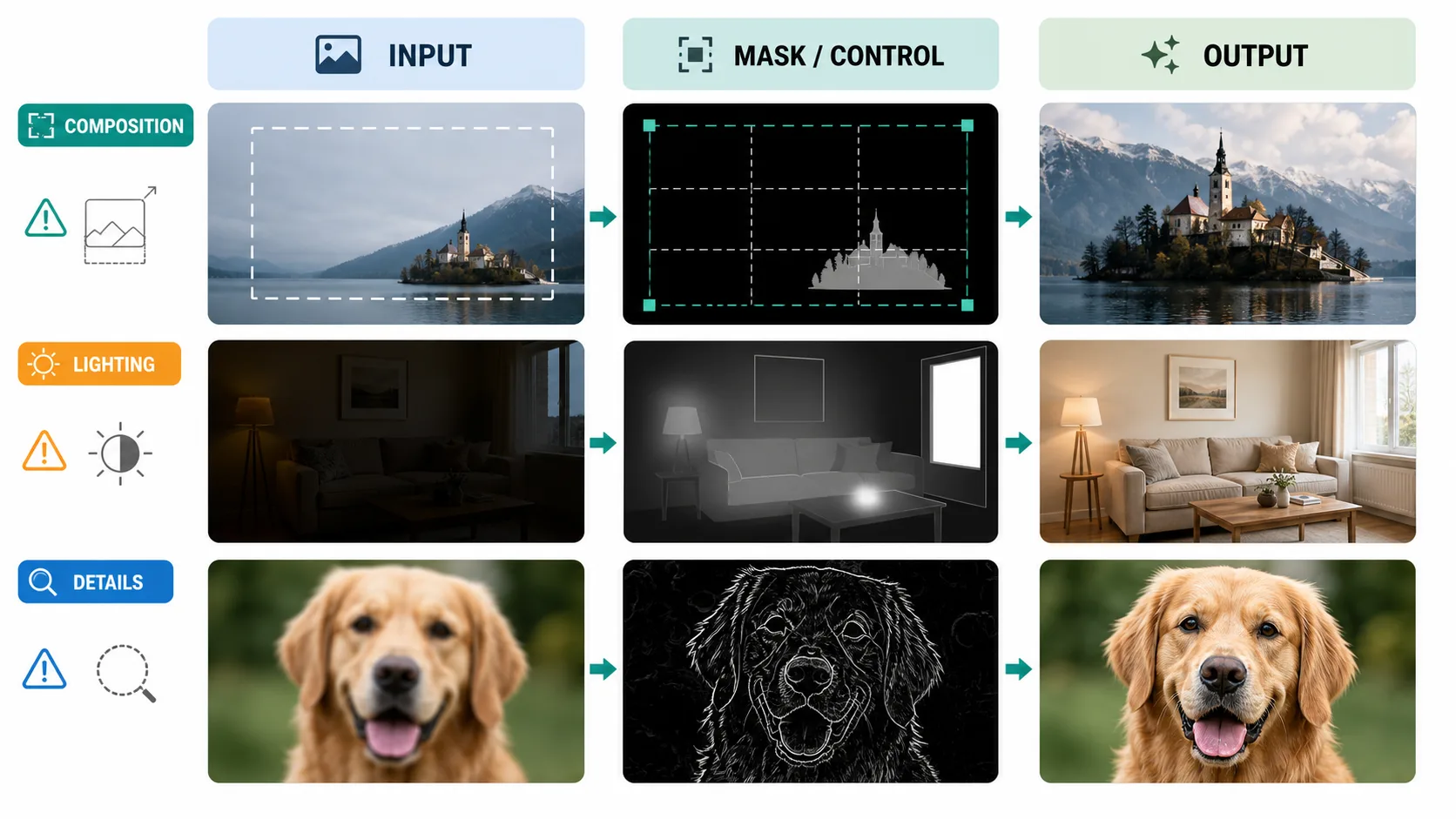

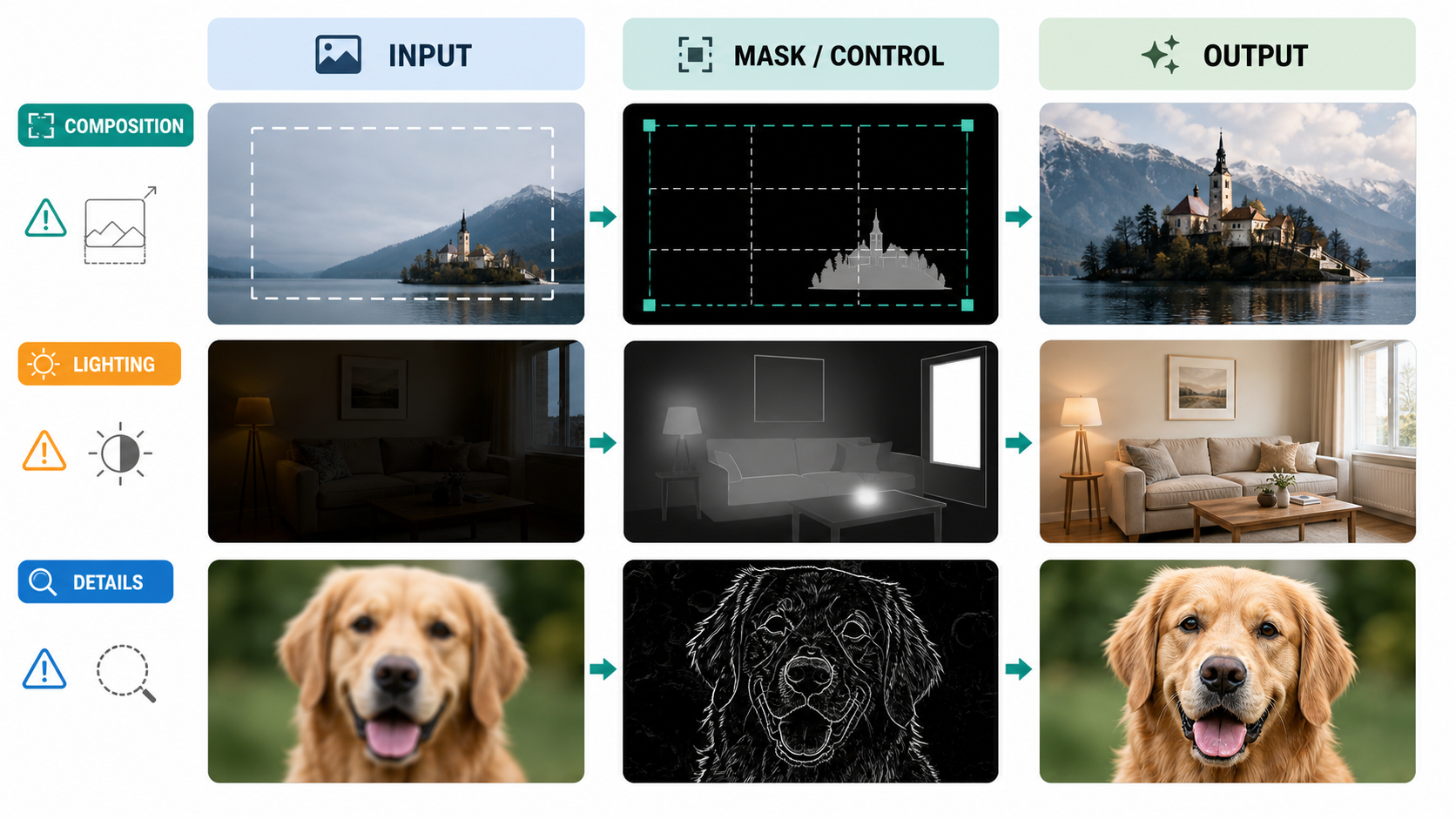

Recommended Before/After Layout for Your Blog or Team Review

The cleanest presentation is a three-panel comparison:

Input | Mask or Control Map | Output

For detail fixes, add a second row with 200 percent close-ups. For team review, add a small parameter footer: model, size, quality, denoise, CFG, steps, sampler, scheduler, seed, control scale, and reference scale. This makes diagnosis repeatable instead of dependent on memory.
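A small record type can print that footer consistently. The `RunRecord` name and its field names are assumptions that mirror the list above. Fields left as `None`, such as CFG for a GPT Image 2 run, are omitted from the footer.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class RunRecord:
    """Parameters to print under a before/after comparison. None = not applicable."""
    model: str
    size: str
    quality: Optional[str] = None
    denoise: Optional[float] = None
    cfg: Optional[float] = None
    steps: Optional[int] = None
    sampler: Optional[str] = None
    scheduler: Optional[str] = None
    seed: Optional[int] = None
    control_scale: Optional[float] = None
    reference_scale: Optional[float] = None

    def footer(self) -> str:
        # Keep declaration order so footers line up across comparison panels.
        parts = []
        for f in fields(self):
            value = getattr(self, f.name)
            if value is not None:
                parts.append(f"{f.name}={value}")
        return " | ".join(parts)
```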

Final Takeaway

Most image-to-image failures are not mysterious. Composition errors need canvas and structure control. Lighting errors need compositing language: light direction, contact shadow, exposure, and color temperature. Detail errors need small masks, references, and conservative repair.

With GPT Image 2, the winning move is usually a clear edit goal, a narrow scope, useful references, and explicit preservation rules. With diffusion workflows, add reproducible parameter testing and structural controls. In both cases, fix the base before polishing the surface.